What we did

- Built a time-indexed corpus of Beige Book narratives, CPI/payrolls/JOLTS releases, and survey data.

- Generated macro forecasting questions at each publication date using later prints as labels.

- Trained with RL against a calibration-aware reward.

- Benchmarked against GPT-5 on held-out Beige Book windows.
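The labeling, reward, and evaluation steps in the list above can be sketched as follows. This is a minimal illustration under stated assumptions, not the pipeline used here. The helper names (`resolve_label`, `brier_reward`, `chronological_split`) are hypothetical. The label is assumed to come from the earliest print published after the question date; the source says only "later prints". The calibration-aware reward is assumed to be the negative Brier score. The held-out windows are assumed to be a simple chronological cutoff.

```python
from __future__ import annotations

from datetime import date

# One print of a single series and reference period: (published_on, value).
# Successive entries are the initial release and any later revisions.
Release = tuple[date, float]


def resolve_label(asked_on: date, releases: list[Release], threshold: float) -> int | None:
    """Resolve the binary question "will the print exceed `threshold`?".

    Only prints published strictly after `asked_on` are eligible, so the label
    is never visible at the time the question is posed. The earliest eligible
    print is used; None means the question cannot be resolved yet.
    """
    eligible = [r for r in releases if r[0] > asked_on]
    if not eligible:
        return None
    _, value = min(eligible)  # tuples compare by publication date first
    return int(value > threshold)


def brier_reward(prob: float, outcome: int) -> float:
    """Assumed calibration-aware reward: the negative Brier score of one forecast.

    The Brier score is strictly proper, so expected reward is maximized by
    reporting the probability the model actually believes.
    """
    return -((prob - outcome) ** 2)


def chronological_split(questions: list[tuple[date, str]], cutoff: date):
    """Train on questions asked before `cutoff`; hold out the later window."""
    train = [q for q in questions if q[0] < cutoff]
    held_out = [q for q in questions if q[0] >= cutoff]
    return train, held_out


# Illustrative values only: a first print of 210, later revised to 190.
prints = [(date(2024, 2, 2), 210.0), (date(2024, 3, 8), 190.0)]
print(resolve_label(date(2024, 1, 15), prints, threshold=200.0))  # 1: first print
print(resolve_label(date(2024, 2, 10), prints, threshold=200.0))  # 0: only the revision is later
print(brier_reward(0.7, 1))  # ≈ -0.09
```

A question asked after the first print is already public can only resolve against the revision. That is the look-ahead guard at work: the model never sees its own label in the corpus available on the question date.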

Example datapoint

A sample training example: the question, its source, and the label derived from the later outcome.
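One way to represent such a record is sketched below. The class and field names are hypothetical, since the document does not specify a schema. The `__post_init__` check encodes the time-indexing idea: the label must come from a print published after the source it is paired with.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class TrainingExample:
    """Hypothetical schema for one question/source/label record."""

    question: str          # binary macro forecasting question
    source_excerpt: str    # text available as of the publication date
    source_date: date      # publication date of the source document
    resolution_date: date  # publication date of the print used as the label
    label: int             # 1 if the event occurred, else 0

    def __post_init__(self) -> None:
        # Look-ahead guard: the label must come from a later print.
        if self.resolution_date <= self.source_date:
            raise ValueError("resolution_date must be after source_date")
        if self.label not in (0, 1):
            raise ValueError("label must be 0 or 1")


# Placeholder content and dates, for illustration only.
example = TrainingExample(
    question="<forecasting question>",
    source_excerpt="<Beige Book excerpt>",
    source_date=date(2024, 1, 17),
    resolution_date=date(2024, 2, 13),
    label=1,
)
```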

Results

Benchmark comparisons against GPT-5 and the base model.

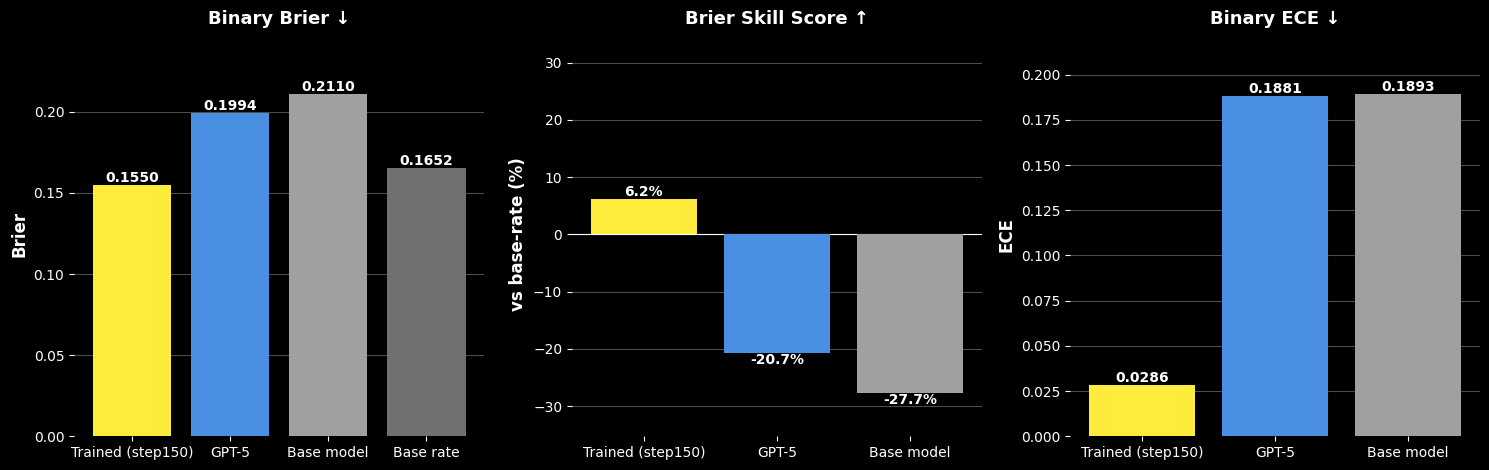

Better Accuracy, Skill, and Calibration vs. GPT-5

Trained on Fed Beige Book narratives, Foresight achieves a Brier score of 0.155, versus 0.199 for GPT-5 and 0.211 for the base model: a 22% reduction in error relative to GPT-5 (about 27% relative to the base model). It is the only model to beat the base rate, with a Brier Skill Score of +6.2% versus −20.7% for GPT-5 and −27.7% for the base model, and it reduces expected calibration error (ECE) roughly sixfold relative to GPT-5.
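For reference, the metrics in this comparison can be computed as follows. This sketch assumes binary questions with 0/1 outcomes and a Brier Skill Score measured against a constant base-rate forecast; the document does not say how its base rate is estimated. The last lines reproduce the 22% figure from the reported scores.

```python
def brier_score(probs: list[float], outcomes: list[int]) -> float:
    """Mean squared difference between forecast probabilities and 0/1 outcomes."""
    if not probs or len(probs) != len(outcomes):
        raise ValueError("probs and outcomes must be non-empty and equal length")
    return sum((p - o) ** 2 for p, o in zip(probs, outcomes)) / len(probs)


def base_rate_brier(outcomes: list[int]) -> float:
    """Brier score of always forecasting the observed base rate."""
    rate = sum(outcomes) / len(outcomes)
    return brier_score([rate] * len(outcomes), outcomes)


def brier_skill_score(bs: float, bs_ref: float) -> float:
    """BSS = 1 - BS / BS_ref; positive only if the model beats the reference."""
    return 1.0 - bs / bs_ref


# Relative Brier reduction of Foresight vs. GPT-5 from the reported scores.
reduction_vs_gpt5 = (0.199 - 0.155) / 0.199
print(f"{reduction_vs_gpt5:.1%}")  # 22.1%
```

A negative skill score, as reported for GPT-5 and the base model, means the model's Brier score is worse than simply forecasting the base rate on every question.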

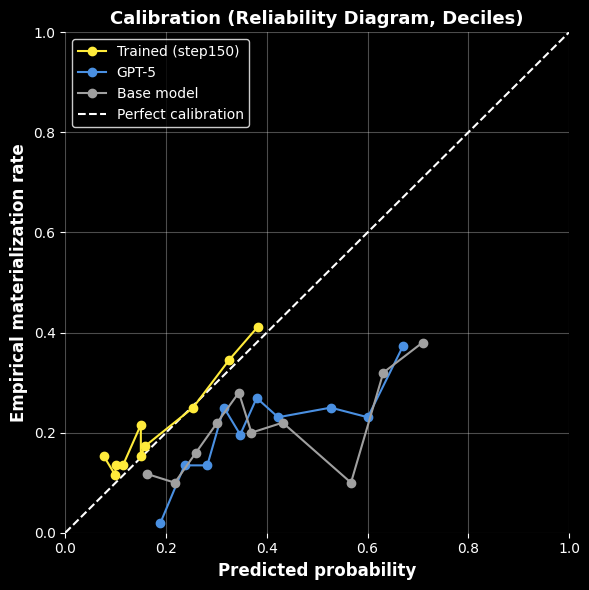

Probabilities That Match Reality — Unlike GPT-5

Foresight (yellow) hugs the perfect-calibration diagonal: when it forecasts 30%, roughly 30% of those events occur. GPT-5 and the base model are systematically overconfident, falling well below the diagonal at higher forecast probabilities, where the events they call likely occur less often than predicted.
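A reliability diagram like this one, and the ECE quoted above, come from binning forecasts by predicted probability. The sketch below assumes ten equal-width bins and a count-weighted ECE. These are common conventions, but the document does not confirm which binning it uses. A bin below the diagonal (observed frequency lower than the mean forecast) is the overconfidence pattern described for GPT-5 and the base model.

```python
def reliability_bins(
    probs: list[float], outcomes: list[int], n_bins: int = 10
) -> list[tuple[float, float, int]]:
    """Group forecasts into equal-width probability bins.

    Returns (mean_forecast, observed_frequency, count) for each non-empty bin.
    Plotting observed_frequency against mean_forecast gives a reliability
    diagram; perfect calibration lies on the diagonal.
    """
    bins: list[list[tuple[float, int]]] = [[] for _ in range(n_bins)]
    for p, o in zip(probs, outcomes):
        idx = min(int(p * n_bins), n_bins - 1)  # p == 1.0 goes in the top bin
        bins[idx].append((p, o))
    result = []
    for b in bins:
        if b:
            mean_p = sum(p for p, _ in b) / len(b)
            freq = sum(o for _, o in b) / len(b)
            result.append((mean_p, freq, len(b)))
    return result


def expected_calibration_error(
    probs: list[float], outcomes: list[int], n_bins: int = 10
) -> float:
    """Count-weighted mean of |observed frequency - mean forecast| over bins."""
    n = len(probs)
    return sum(
        count / n * abs(freq - mean_p)
        for mean_p, freq, count in reliability_bins(probs, outcomes, n_bins)
    )


# Illustrative only: 90% forecasts on events that occur 6 times out of 10.
probs = [0.9] * 10
outcomes = [1] * 6 + [0] * 4
print(reliability_bins(probs, outcomes))  # one bin, frequency 0.6 below forecast 0.9
print(expected_calibration_error(probs, outcomes))  # ≈ 0.3
```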
