What we did

- Scraped SEC EDGAR for 10-K, 10-Q, and 8-K filings with precise timestamps.

- Generated forward-looking risk questions anchored at each filing date.

- Labeled each question with its actual downstream outcome: enforcement actions, restatements, or drawdowns.

- Fine-tuned Qwen3-32B and benchmarked on 6,109 held-out SEC risk queries.
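The steps above hinge on one constraint: each question is anchored at a filing's precise timestamp, and the model may only see information available at that instant. The sketch below shows one way to enforce that. It is a minimal illustration, not the pipeline used here. The `Filing` and `RiskQuestion` records, the question template, the 365-day horizon and the example corpus (ticker `XYZ`, all timestamps) are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Filing:
    ticker: str
    form: str           # "10-K", "10-Q", or "8-K"
    filed_at: datetime  # precise EDGAR filing timestamp


@dataclass(frozen=True)
class RiskQuestion:
    text: str
    as_of: datetime       # the forecast is made at this instant
    resolve_by: datetime  # end of the outcome window


def make_question(filing: Filing, event: str, horizon_days: int = 365) -> RiskQuestion:
    """Anchor a forward-looking question at the filing timestamp (hypothetical template)."""
    text = (
        f"Will {filing.ticker} face {event} within {horizon_days} days "
        f"of its {filing.filed_at:%b %d, %Y} {filing.form}?"
    )
    return RiskQuestion(text, filing.filed_at, filing.filed_at + timedelta(days=horizon_days))


def visible_filings(corpus: list[Filing], question: RiskQuestion) -> list[Filing]:
    """Return only filings published at or before the question's as-of time,
    so nothing filed after the anchor can leak into the model's context."""
    return [f for f in corpus if f.filed_at <= question.as_of]


# Hypothetical corpus for one issuer; ticker and timestamps are illustrative only.
corpus = [
    Filing("XYZ", "10-Q", datetime(2024, 11, 8, 16, 5)),
    Filing("XYZ", "10-K", datetime(2025, 2, 28, 17, 30)),
    Filing("XYZ", "8-K", datetime(2025, 6, 2, 9, 0)),
]
question = make_question(corpus[1], "an SEC enforcement action")
print(question.text)
print([f.form for f in visible_filings(corpus, question)])  # ['10-Q', '10-K']
```

The later 8-K is excluded from the context even though it already exists in the corpus. This is the leakage that timestamp-level precision is meant to prevent.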

Example datapoint

A sample training example: the question, its source filing, and the outcome-derived label.

Dataset: SEC risk signals (RL)

Question

Will Northwind Logistics Inc. (NASDAQ: NWIN) face a Commission enforcement action within 12 months of the Feb 28, 2025 10-K disclosure of an informal revenue-recognition inquiry?

Question source

10-K risk factors, Feb 28, 2025

Disclosure of ongoing informal inquiry related to revenue recognition policies

Label

Yes.

Type

binary

Confidence

Label source

SEC litigation release, Nov 4, 2025

Commission orders cease-and-desist and civil penalty in accounting fraud matter
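The example label can be reproduced mechanically. The 10-K is dated Feb 28, 2025, and the SEC litigation release is dated Nov 4, 2025. That release falls inside the 12-month window, so the binary label resolves to "Yes." The sketch below assumes certain resolution rules: an outcome counts only if it occurs strictly after the anchor date and on or before the same calendar day 12 months later. The `OutcomeEvent` record and the `"enforcement_action"` category name are hypothetical, since the case study does not publish its resolution code.

```python
import calendar
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class OutcomeEvent:
    kind: str          # hypothetical category, e.g. "enforcement_action"
    occurred_on: date
    source: str        # where the outcome was observed


def add_months(d: date, months: int) -> date:
    """Shift a date by whole calendar months, clamping to the target month's last day."""
    month_index = d.month - 1 + months
    year = d.year + month_index // 12
    month = month_index % 12 + 1
    last_day = calendar.monthrange(year, month)[1]
    return date(year, month, min(d.day, last_day))


def resolve_binary(anchor: date, horizon_months: int, kind: str,
                   events: list[OutcomeEvent]) -> bool:
    """True if an event of `kind` occurs strictly after the anchor date and
    no later than anchor + horizon_months; otherwise False."""
    deadline = add_months(anchor, horizon_months)
    return any(e.kind == kind and anchor < e.occurred_on <= deadline for e in events)


# The example datapoint: 10-K on Feb 28, 2025; SEC litigation release on Nov 4, 2025.
events = [OutcomeEvent("enforcement_action", date(2025, 11, 4), "SEC litigation release")]
label = resolve_binary(date(2025, 2, 28), 12, "enforcement_action", events)
print("Yes." if label else "No.")  # Yes.
```

Calendar-month arithmetic, rather than a fixed 365 days, keeps "within 12 months" aligned with how the question is worded. Either convention is a judgment call.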

Results

Benchmark comparisons against frontier models.

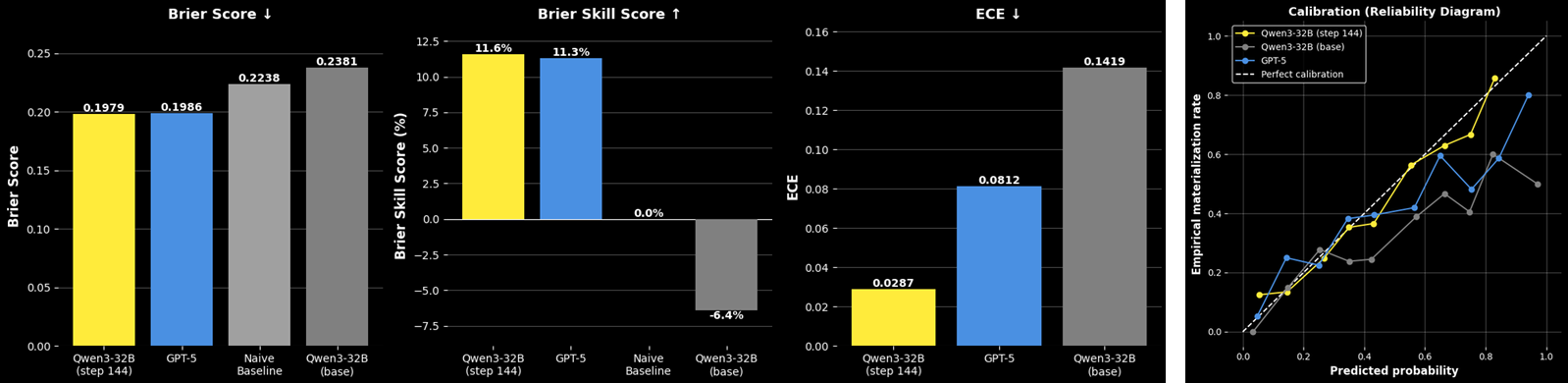

SEC Risk Prediction: Brier Score, Skill, and Calibration

Fine-tuned Qwen3-32B achieves a Brier Skill Score of +11.6% and an expected calibration error (ECE) of 0.029 across 6,109 SEC risk queries. That ECE is 64.7% lower than GPT-5's (0.081). The model learns to distinguish boilerplate legal language from meaningful signals that precede adverse outcomes.
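For readers unfamiliar with the three metrics, the sketch below shows how they are commonly computed for binary forecasts:

- The Brier score is the mean squared error between forecast probabilities and 0/1 outcomes.
- The Brier Skill Score is 1 − BS / BS_ref.
- ECE is the sample-weighted average gap between mean forecast and observed frequency within probability bins.

The write-up does not state the reference forecast or the binning. This sketch therefore assumes a constant base-rate reference and 10 equal-width bins, and the toy forecasts are made up rather than taken from the benchmark.

```python
def brier_score(probs: list[float], outcomes: list[int]) -> float:
    """Mean squared difference between forecast probabilities and 0/1 outcomes."""
    return sum((p - y) ** 2 for p, y in zip(probs, outcomes)) / len(probs)


def brier_skill_score(probs: list[float], outcomes: list[int]) -> float:
    """1 - BS / BS_ref, where the reference always forecasts the sample base rate
    (an assumed reference; the write-up does not name one)."""
    base_rate = sum(outcomes) / len(outcomes)
    reference = brier_score([base_rate] * len(outcomes), outcomes)
    if reference == 0:
        raise ValueError("BSS is undefined when every outcome is identical")
    return 1.0 - brier_score(probs, outcomes) / reference


def expected_calibration_error(probs: list[float], outcomes: list[int], n_bins: int = 10) -> float:
    """Weighted mean gap between average forecast and observed frequency,
    using equal-width probability bins (bin count is an assumption)."""
    bins: list[list[tuple[float, int]]] = [[] for _ in range(n_bins)]
    for p, y in zip(probs, outcomes):
        # p == 1.0 would map to index n_bins, so clamp it into the top bin
        bins[min(int(p * n_bins), n_bins - 1)].append((p, y))
    ece = 0.0
    for members in bins:
        if members:
            mean_prob = sum(p for p, _ in members) / len(members)
            hit_rate = sum(y for _, y in members) / len(members)
            ece += len(members) / len(probs) * abs(mean_prob - hit_rate)
    return ece


# Toy forecasts (not from the benchmark) to show the calls.
probs = [0.9, 0.8, 0.2, 0.1, 0.7, 0.3]
outcomes = [1, 1, 0, 0, 0, 1]
print(f"Brier {brier_score(probs, outcomes):.3f}")
print(f"BSS   {brier_skill_score(probs, outcomes):+.1%}")
print(f"ECE   {expected_calibration_error(probs, outcomes):.3f}")
```

A positive BSS means the forecasts beat the reference, so the sign of the reported +11.6% depends on which reference was used. ECE reported with few or very wide bins can look better than it is, so the bin count matters when comparing models.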

Read more

Papers, models, datasets, notebooks, and write-ups for this case study.

Blog post (blog.lightningrod.ai)

SEC risk prediction: signal vs. noise

How to train a model to predict SEC enforcement from public filings

Notebook (github.com)

Document forecasting notebook

Advanced document processing with metadata filtering, RAG context generation, and temporal controls; well suited to SEC filings and financial reports.

Dataset (huggingface.co)

SEC risk questions test set

Evaluation set of SEC filing questions with resolved outcomes